Therapy is no longer confined to a consulting room. For many UK adults managing anxiety or depression, support is now available through AI-powered tools integrated directly into mainstream care pathways. AI chatbots like Wysa and Limbic are already used within NHS Talking Therapies, offering structured support between sessions or while waiting for a human therapist. This guide examines what these tools offer, how effective they are, where their limits lie, and how they fit within the broader range of online therapy services available to you in the UK.

Table of Contents

- How AI tools have changed therapy access in the UK

- Comparing AI to traditional therapy methods

- What the research says: AI therapy outcomes and risks

- Professional standards and safeguards for safe AI therapy

- Making informed choices: finding the right AI or blended therapy option

- Explore safer, supported therapy with expert-guided AI

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI increases access | AI chatbots help more UK adults get timely support for anxiety and depression. |
| Used best as a supplement | AI should enhance, not replace, human mental health support and is not for crisis situations. |
| Research shows benefits and limits | AI therapy boosts engagement and recovery for some, but carries risks and must be used with professional oversight. |
| Choose safe platforms | Opt for services that clearly explain their AI use, safety processes, and data privacy standards. |

How AI tools have changed therapy access in the UK

Access to mental health support has long been a challenge in the UK. Long waiting lists, limited appointment times, and geographical barriers have left many people without timely help. AI tools are changing that picture in practical, measurable ways.

Platforms such as Wysa and Limbic are now integrated into NHS Talking Therapies, providing structured support at scale. These tools guide users through self-referral, support triage, reduce the administrative burden on clinicians, and deliver cognitive behavioural therapy (CBT) exercises at any hour. CBT is a structured, evidence-based approach that helps you identify and change unhelpful thought patterns.

The accessibility benefits are significant. AI tools are available 24 hours a day, seven days a week, so support is not limited to office hours. For mild to moderate anxiety and depression, this always-on availability can fill gaps in NHS provision that would otherwise leave people without any structured support for weeks or months.

Key functions these tools currently provide include:

- Self-referral guidance and triage support

- Waitlist management and appointment preparation

- CBT-based exercises and mood tracking

- Psychoeducation resources available on demand

- Progress monitoring between human therapy sessions

If you are exploring your options, reviewing the available therapy options for anxiety can help you understand where AI tools fit within a broader care plan.

Comparing AI to traditional therapy methods

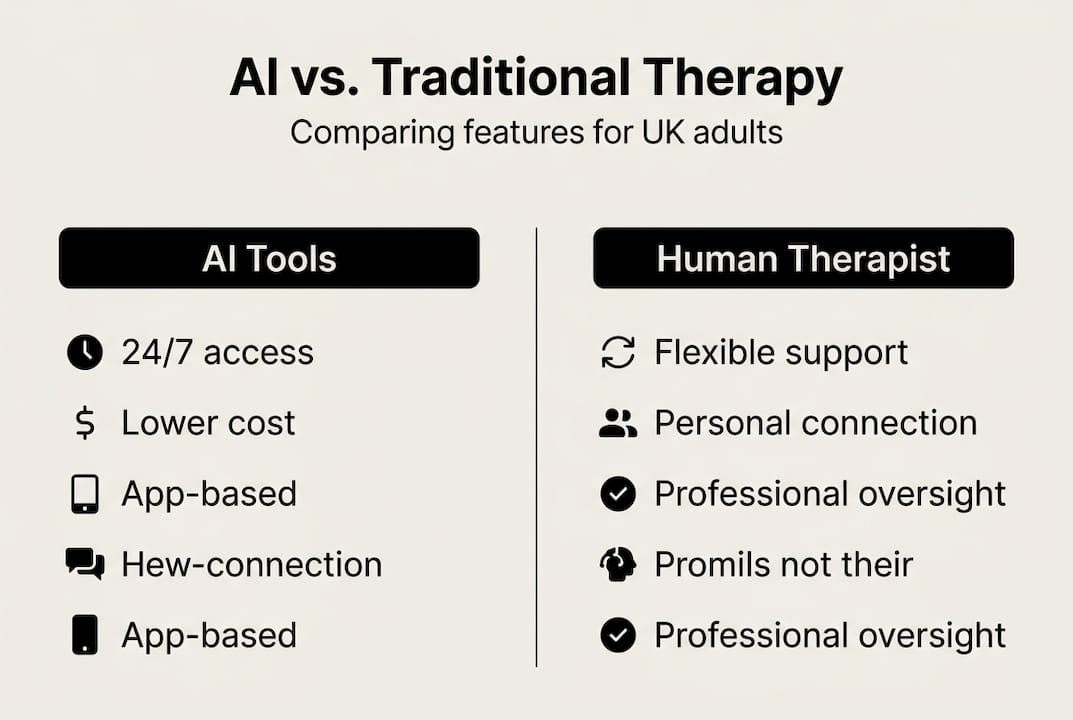

Understanding the differences between AI-enabled therapy and traditional human-led therapy helps you make informed decisions about your care.

| Feature | AI therapy tools | Human therapist |
|---|---|---|
| Availability | 24/7 | Scheduled appointments |
| Cost per user | As low as £5.90 | £50 to £100+ per session |
| Emotional depth | Limited | High |
| Crisis response | Not suitable | Trained and equipped |
| Personalisation | Algorithm-based | Relational and adaptive |
| Engagement (CBT) | High | Varies by format |

Randomised controlled trials show that AI-delivered CBT increases engagement by 2.4 to 3.8 times compared with standard waiting-list conditions, with moderate reductions in symptoms. However, the British Psychological Society notes that AI CBT matches text-based human therapy on some measures but lacks the relational depth that many people find essential for lasting change.

The relational component matters. A human therapist can read tone, adapt in real time, and hold space for complex emotional experiences in ways that no algorithm currently replicates. For straightforward or mild presentations, AI tools perform well. For complex trauma, grief, or long-standing mental health conditions, human involvement remains essential.

Pro Tip: Use AI therapy tools as a supplement to human-led sessions, not as a standalone solution for complex or ongoing mental health concerns. Pairing both approaches tends to produce better outcomes than either alone.

When you are ready to find a qualified professional, therapist matching online can help you identify the right fit based on your specific needs and preferences. You can also review a complete guide to therapy sessions for anxiety and depression to understand what to expect.

What the research says: AI therapy outcomes and risks

The evidence base for AI therapy is growing, and the results are encouraging for specific use cases. Among Wysa users, 36% showed reliable change in anxiety and 27% in depression, while Limbic increased referral completion rates by 30%. These are clinically meaningful figures, particularly for people waiting for a human therapist.

| Outcome measure | Wysa | Limbic |
|---|---|---|
| Reliable change in anxiety | 36% | Not reported |
| Reliable change in depression | 27% | Not reported |
| Referral completion increase | Not reported | 30% |
| Engagement increase vs waiting list | 2.4 to 3.8x | Significant |

However, the risks are equally important to understand. The BPS has confirmed that AI is unsuitable for crises, severe symptoms, medication queries, or situations involving suicidality. There is also a documented risk of dependency on AI reassurance, a potential for inaccurate responses (sometimes called hallucinations), and the possibility that poorly managed AI interactions could worsen anxiety in vulnerable users.

To use AI therapy tools safely, follow these guidelines:

- Confirm the platform has clear human oversight and escalation protocols.

- Save crisis contact details such as Samaritans (116 123) before starting any digital tool.

- Use AI tools for structured exercises, not for processing acute distress.

- Review your progress regularly and share it with a human therapist where possible.

- Stop using any tool that increases your distress and seek human support instead.

For a broader view of how to stay safe when accessing support online, the online therapy safety guide provides clear, practical information specific to UK adults.

Professional standards and safeguards for safe AI therapy

In the UK, the National Institute for Health and Care Excellence (NICE) and the British Psychological Society (BPS) both play a role in shaping how AI is used ethically within mental health services. NICE updates standards for AI use in healthcare, emphasising that these tools should supplement, not replace, human clinical judgement.

Ethical and privacy concerns with AI therapy are taken seriously at a regulatory level. Any reputable platform should be transparent about how your data is stored, who can access it, and how AI outputs are reviewed by qualified staff.

When assessing whether a platform is trustworthy, look for the following:

- Clear information about data storage and privacy practices

- Therapists registered with a recognised professional body such as BACP, UKCP, or NCPS

- Documented human oversight of AI interactions

- Explicit crisis protocols and signposting to emergency services

- Transparent explanation of how AI tools are used within the service

Pro Tip: Always choose platforms that clearly explain how their AI is supervised and how your personal data is protected. If this information is not easy to find, treat that as a warning sign.

For practical guidance on getting started, the step-by-step online therapy access guide walks you through the process clearly. You can also check therapist registration standards to verify that any professional you work with meets UK requirements.

Making informed choices: finding the right AI or blended therapy option

Choosing between AI-only, blended, or fully human-led therapy depends on your current needs, the severity of your symptoms, and your personal preferences. There is no single correct answer, but there are clear indicators to guide your decision.

AI support is best used as a supplement or first-aid measure, not as the sole solution for mental health challenges. With that principle in mind, consider the following when assessing your options:

- Are your symptoms mild to moderate, or do they significantly affect daily functioning?

- Do you have access to a human therapist, or are you currently on a waiting list?

- Are you comfortable using digital tools, and do you have reliable internet access?

- Does the platform you are considering have clear crisis protocols?

- Can you easily switch to human-led support if your needs change?

Monitoring your progress is essential. Keep a record of your mood, sleep, and anxiety levels over time. Many platforms include built-in tracking tools, but a simple journal works equally well. If your symptoms worsen or you feel the AI tool is not meeting your needs, move to human support without delay.

Flexibility matters. The best platforms allow you to adjust your approach as your needs evolve, whether that means increasing session frequency, switching therapists, or moving between AI and human-led formats. For further reading on self-directed support, the self-help therapy guide offers structured, evidence-informed options you can use alongside any therapy format.

Explore safer, supported therapy with expert-guided AI

If this guide has helped clarify what AI therapy can and cannot offer, the next step is finding a service that combines both effectively. MySafeTherapy provides access to AI-enhanced tools and human-led therapy, individually or in combination, with professional oversight built in from the start.

You can start therapy today with a straightforward process that connects you to UK-accredited therapists registered with BACP, UKCP, or NCPS. Sessions are available via video, chat, or avatar-based formats, including evenings and weekends. If you are unsure which type of support is right for you, the therapy suitability quiz provides a quick, confidential assessment to guide your next step. All data is handled with strict privacy standards, and human oversight is maintained throughout every stage of your care.

Frequently asked questions

Can AI therapy fully replace traditional therapy with a human?

No. AI tools lack relational depth and are not designed to replace the nuanced judgement and emotional attunement that a qualified human therapist provides. They work best as a supplement to human-led care.

Are AI chatbots like Wysa or Limbic safe for people in a mental health crisis?

No. AI chatbots are not suitable for crises, severe symptoms, or situations involving suicidality. Anyone in acute distress should contact a human professional or emergency service immediately.

How effective is AI in improving symptoms of anxiety and depression?

AI-guided CBT increases engagement and produces moderate reductions in anxiety and depression symptoms, particularly for mild to moderate presentations. Results vary depending on the individual and the platform used.

What safeguards exist for data privacy when using AI therapy?

Reputable platforms follow NICE and BPS standards, which call for transparent privacy practices, clear data handling policies, and documented human oversight of AI interactions.